The AI-Driven RAM Crisis Explained (Part 1) | 22/04/26

A beginner’s guide on how all of this was allowed to happen.

The AI and Games Newsletter brings concise and informative discussion on artificial intelligence for video games each and every week, including industry news, innovative research, emerging trends, and our own exclusive editorial and reporting.

You can subscribe to and support AI and Games, with weekly editions appearing in your inbox. If you’d like to work with us on your own games projects, please check out our consulting services. To sponsor, please visit the dedicated sponsorship page.

Hello and welcome to this week’s edition of AI and Games. For this issue, I wanted to pick up on a reader-voted topic from earlier in the year, and start taking a crack at it. We’ve seen a lot of news stories of late about the impact that AI is having on gaming hardware, with everything from PC components to games consoles and even the impending Steam Machine seeing price increases as a result.

This week it’s a high-level overview as to why this is happening, but going into it in a little more detail than most other outlets. I wanted to paint a more accurate picture of why this is happening, how it was foreseeable, and why it is not an easy fix.


More AI and Games Conference 2025 Recordings

Before we get into the meat of the issue, we have more conference talks for you! Following the release of presentations from BitPart.ai and Havok last week, two more talks from last year’s event just dropped on our conference YouTube channel this morning.

Predicting Combat Outcomes in Total War

James Kwan and Miguel Lopez-Bachiller, Creative Assembly

One of the features of Total War games is the ability to auto-resolve combat: rather than have two players fight it out, the system can simulate the outcome. This is a useful feature both for players and for the in-game AI, given they can use the outcome of the simulation to decide whether to even engage in conflict. In this talk we hear about a new approach to improving the auto-resolver using machine learning. Collating a large amount of data from armies battling it out helps create a more accurate prediction model, based on how they actually performed across a variety of conditions.

When Research Meets Release Dates: Production-Grade Reinforcement Learning for Games

Wes Kerr, Riot Games

We hear a lot about how to use machine learning, notably reinforcement learning, as a means to enhance gameplay. But the reality is it’s quite difficult to do that during the ongoing development of a game. Wes Kerr, who leads the Tech Research team at Riot Games, highlights the lessons learned from working at the intersection of research and live games, and how safety, evaluation, and reliability are paramount when you’re working on ever-evolving and player-facing content. Plus we get some insight into what the team learned when it opted to try a different approach to creating bots for Riot’s fighting game 2XKO.

The AI RAM Crisis Part I: How Did We Get Here?

In recent months we’ve seen much discussion online, be it on this newsletter or elsewhere, about AI’s impact on the cost of computing, and what this is doing to the production and retail costs of consumer products. This surge in pricing, which has been nicknamed the RAMpocalypse, RAMmageddon, and much more besides, stems by and large from the surge in business activity surrounding (Generative) AI.

This has had a significant impact across the gaming space. Consoles such as Sony’s PlayStation and Microsoft’s Xbox Series S|X have seen prices increase first in late 2025 and again in 2026, due to a combination of component costs and, previously, US tariffs. The Nintendo Switch 2 has not seen a price increase yet, but peripherals did receive a price adjustment in the United States at launch as a result of the tariffs issued at the time. Valve’s Steam Machines have been delayed given the price tag has been hard for them to nail down. Meanwhile just last week, the Meta Quest 3S and Quest 3 VR headsets saw increases of $50 USD and $100 USD respectively, with “the global surge in the price of critical components - specifically memory chips” being cited as the root cause.

The surge in pricing is largely being triggered by investment currently being put into Generative AI.

But it’s not just the consoles that are suffering. If anything, it looks much worse when you look elsewhere. The same chip shortages are resulting in price increases for the memory in your computer, along with GPUs and solid-state drives (SSDs). This leads not just to inflated costs for upgrading your computer, but also has a knock-on effect for other consumer products such as laptops, smartphones, and virtually every consumer-grade product that needs chips for core functions. It’s so bad that Nvidia are planning to re-release old GPUs because they’re cheaper and easier to manufacture in the current climate.

As is well-known, the surge in pricing is largely being triggered by the significant amount of investment into Generative AI. However, it might not be obvious why exactly this is happening.

So for this edition, the first of a beginner’s deep-dive into the issue, we’re going to talk about:

What are the shortages that are actually occurring?

Why is this happening?

How did this situation even come to pass?

Then in a follow-up issue we’ll dig deeper into what the future looks like, and how this may potentially resolve itself, and the bigger industry challenges this presents going forward.

The Problem Statement

So let’s start by getting into what the issue is, and how this manifests. It all starts with the chips used in the manufacturing of specific computing components, and then it’s the AI industry’s interest in them that has caused the supply chain issue.

What Exactly is in Short Supply?

As we explained a moment ago, the issue is appearing in a variety of consumer products, and most notably in PC and gaming hardware, but it all stems from a single resource: the manufacture of memory chips for computers.

If you’re not familiar, memory for computers comes in two forms: volatile and non-volatile. The former is memory that only maintains information while it is supplied with electrical power, while the latter will persist over time. So volatile memory covers things like Dynamic Random Access Memory (DRAM), which is used in a computer or games console to store information about the game temporarily so it can be used thereafter. Meanwhile non-volatile memory is typically NAND or ‘flash’ memory, which is used in the likes of SD cards, solid-state drives, and the storage on devices like smartphones.

Both DRAM and NAND memory come in all different shapes and sizes, and over the years chip designers have built a variety of permutations with different storage capacities, data transfer rates, and other costs. You’ll find forms of both types of memory built to support the likes of mobile devices, consumer-grade PCs and games consoles, but also cloud computing and server infrastructures. Plus it’s worth mentioning that even the CPUs and GPUs used for core logic processing and graphics rendering on these devices carry small amounts of their own memory to handle specific aspects of their execution, such as cache memory: very fast, very short-term memory (typically built from a different volatile technology, SRAM, rather than DRAM) that sits right next to the processor for when it is doing any type of calculation.

Now the thing is, High-Bandwidth Memory used in AI re-uses DRAM traditionally used in consumer hardware.

However, for the most part the average person will have noticed that the discussion around memory usually focusses on consumer-grade PC specifications and games consoles. Over the past 15+ years the big ongoing development in consumer memory has been what is known as Double Data Rate or DDR RAM. DDR4 was the dominant memory on PCs from the mid-2010s onwards, while the Xbox Series S|X uses GDDR6 (a variation used to support GPU processes) and the PlayStation 5 uses a mixture of DDR4 and GDDR6 - with the PS5 Pro also having a smidge of DDR5 in there. At the time of writing it is still rather common for computers to use DDR4 or DDR5 for ‘traditional’ compute processes, while GDDR7 is the hot commodity, commonly used for graphics rendering and, oh yeah… AI.

What Does AI Have to Do With It?

So yes, first and foremost, GDDR7 is something of use to AI, given it’s designed to allow for fast data processing. But the real issue isn’t GDDR7 but what we call High-Bandwidth Memory (HBM), which is designed for use in data centres, or in things like Nvidia’s dedicated AI compute devices such as the H100, where it’s packaged right alongside the GPU.

Now the thing is, HBM essentially re-uses traditional DRAM technology, but it’s just built differently. It sticks DRAM into a vertical stacking setup that allows for - as the name implies - incredibly high bandwidth of data processing. A typical HBM3 stack, which has around 8-12 layers of DRAM in it, might have a smaller raw capacity than DDR5, but it will offer 10x-15x the throughput. This allows it to be used in processing for Generative AI models, which pump through hundreds of GB of data very quickly during both training and real-time execution (aka inferencing).
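To put that throughput gap in perspective, here is a rough back-of-the-envelope sketch in Python. Only the 10x-15x multiplier comes from the paragraph above. The baseline bandwidth and the 200 GB workload are assumed round numbers picked purely for illustration, not measurements of any real product.

```python
# Illustrative only: the baseline bandwidth and data size are ASSUMED round
# numbers, not measured figures for any specific memory product.

ASSUMED_BASELINE_GB_PER_SEC = 50.0  # hypothetical DDR5-class baseline
HBM_MULTIPLIERS = (10, 15)          # the 10x-15x range quoted in the article
ASSUMED_DATA_GB = 200.0             # hypothetical "hundreds of GB" workload


def seconds_to_stream(data_gb: float, bandwidth_gb_per_sec: float) -> float:
    """Time to move data_gb of data once at a given sustained bandwidth."""
    if bandwidth_gb_per_sec <= 0:
        raise ValueError("bandwidth must be positive")
    return data_gb / bandwidth_gb_per_sec


def compare(data_gb: float = ASSUMED_DATA_GB,
            baseline: float = ASSUMED_BASELINE_GB_PER_SEC) -> dict:
    """Compare one full pass over the data at baseline vs. multiplied bandwidth."""
    results = {"baseline": seconds_to_stream(data_gb, baseline)}
    for multiplier in HBM_MULTIPLIERS:
        results[f"{multiplier}x"] = seconds_to_stream(data_gb, baseline * multiplier)
    return results


if __name__ == "__main__":
    for label, secs in compare().items():
        print(f"{label:>8}: {secs:.2f} s per full pass over the data")
```

The absolute numbers are made up, but the shape of the result holds whatever baseline you plug in: a model that has to sweep through its data over and over finishes each pass 10-15 times sooner, which is why AI hardware is built around HBM rather than ordinary DDR.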

Right now everyone from OpenAI to Nvidia, Microsoft, Oracle and more are pushing for investment in the AI data centres that help them roll out their tools and services. There are even concerns from these companies that data centres are not being built fast enough. Though what market actually exists for these data centres has yet to be properly defined, and is rather unclear.

I’ll refrain from editorialising at this stage as to the worth or need for these data centres, and the shady financing going on to get these projects off the ground. But for now let’s just deal with the reality that this is happening, whether we like it, or want it.

The WHAT: TLDR

So what is happening really boils down to the following:

As the hype for Generative AI continues, so too does the demand for large-scale AI datacentres.

As more and more datacentres are built to host large-scale AI models, they need a lot of HBM to function at the scale demanded by Generative AI models such as GPT.

The increased need for HBM consumes the DRAM supply that would otherwise go into the DDR memory on the market.

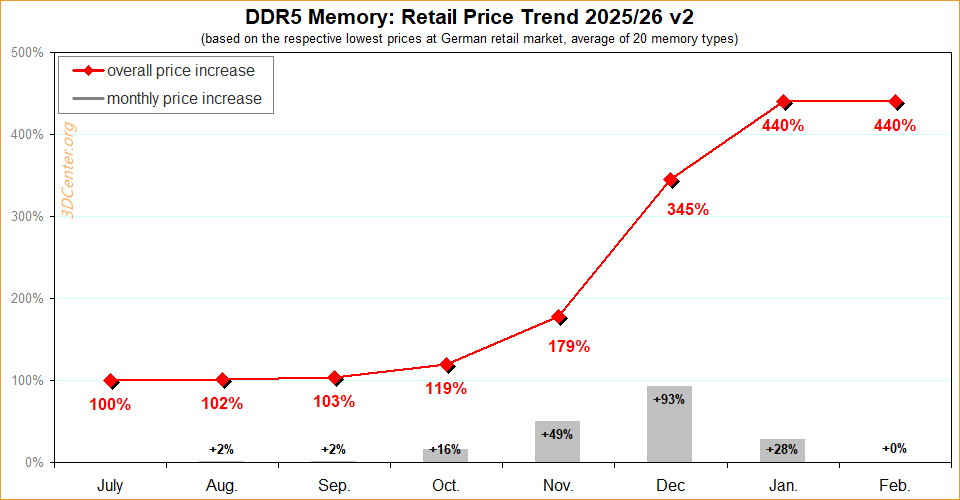

In the past year we’ve seen the cost of traditional hard drives, SSDs, GPUs, and DDR RAM increase significantly as a result of this bottleneck that is emerging, with DDR5 RAM in particular shooting up by more than 400%.
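Percentage increases are easy to misread, so here is a quick sketch of what a 400% rise actually means. The starting price is a hypothetical round number, not a real quote for any product.

```python
def price_after_increase(original_price: float, percent_increase: float) -> float:
    """Apply a percentage increase: a 400% rise means the price is 5x the original."""
    return original_price * (1 + percent_increase / 100)


# Hypothetical starting price for a RAM kit, used purely for illustration.
hypothetical_kit_price = 100.0

new_price = price_after_increase(hypothetical_kit_price, 400)
print(new_price)                           # 500.0
print(new_price / hypothetical_kit_price)  # 5.0
```

In other words, an increase of more than 400% means paying over five times the old price, not four.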

Why Is This Happening?

Having covered the what, let’s get into the why. Why is HBM consuming the supply chain market? Well in order to answer that, we need to spend a little bit of time discussing the how: how semiconductors, the micro-level components used in the likes of DRAM and HBM, are actually built.

Semiconductors are built courtesy of what are known as fabrication plants or ‘fabs’. These plants are incredibly complex and expensive facilities designed to etch electronic circuits onto semiconductor wafers - typically made from silicon - at an unbelievably small scale. For context, the individual chips cut from a wafer are typically millimetres across, with circuitry etched into them at microscopic levels. Since the boom of semiconductor manufacturing began in earnest in the 1960s, we have seen the size of individual transistors used as part of circuitry reduce from micrometres (i.e. thousandths of a millimetre) to nanometres (i.e. thousandths of a micrometre), with contemporary transistors manufactured at around 7 nanometres and smaller. By comparison, nucleotides that compose DNA in organic life are around 1.5 nanometres. That’s how small we’re dealing with here.
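If the units are hard to picture, this small sketch walks through the conversions. It treats “7 nanometres” as a literal width purely for illustration. In practice process-node names are marketing labels rather than exact measurements of any one feature, so read the result as a sense of scale and nothing more.

```python
# Unit relationships described in the text above.
UM_PER_MM = 1_000  # micrometres in a millimetre
NM_PER_UM = 1_000  # nanometres in a micrometre
NM_PER_MM = UM_PER_MM * NM_PER_UM


def features_across(span_mm: int, feature_nm: int) -> int:
    """How many features of width feature_nm fit side by side across span_mm."""
    if feature_nm <= 0:
        raise ValueError("feature size must be positive")
    return (span_mm * NM_PER_MM) // feature_nm


print(NM_PER_MM)              # 1000000
print(features_across(1, 7))  # 142857
```

So a single millimetre has room for well over a hundred thousand 7 nanometre features in a row, and that is before you count the second dimension.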

There are fewer than 100 fabs worldwide that manufacture cutting-edge chips.

Now I bring this up because, as you can imagine, this is a highly complex and expensive proposition. Etching silicon - the process of using lasers at precise microscopic sizes to create the circuits - is but one of many steps taken to ensure the final chips are going to work and are of suitable quality. On average it takes 10-15 weeks for a chip to be made, depending on the size of the etching, given the cleaning and preparation of the silicon that is required. Plus there is extensive testing of individual transistors before stitching them into more complex chips. The subsequent creation of the complete product, which often means sticking dozens of fully manufactured chips together, can take months if not longer. All of this requires a huge amount of resources, both in terms of human expertise and the amount of hardware required to manufacture at this level of precision. The companies that manufacture the specific components needed for a particular process, notably in areas of photolithography (the lasers that draw circuits onto the silicon), often number in the single digits - and their machines cost tens if not hundreds of millions of dollars.

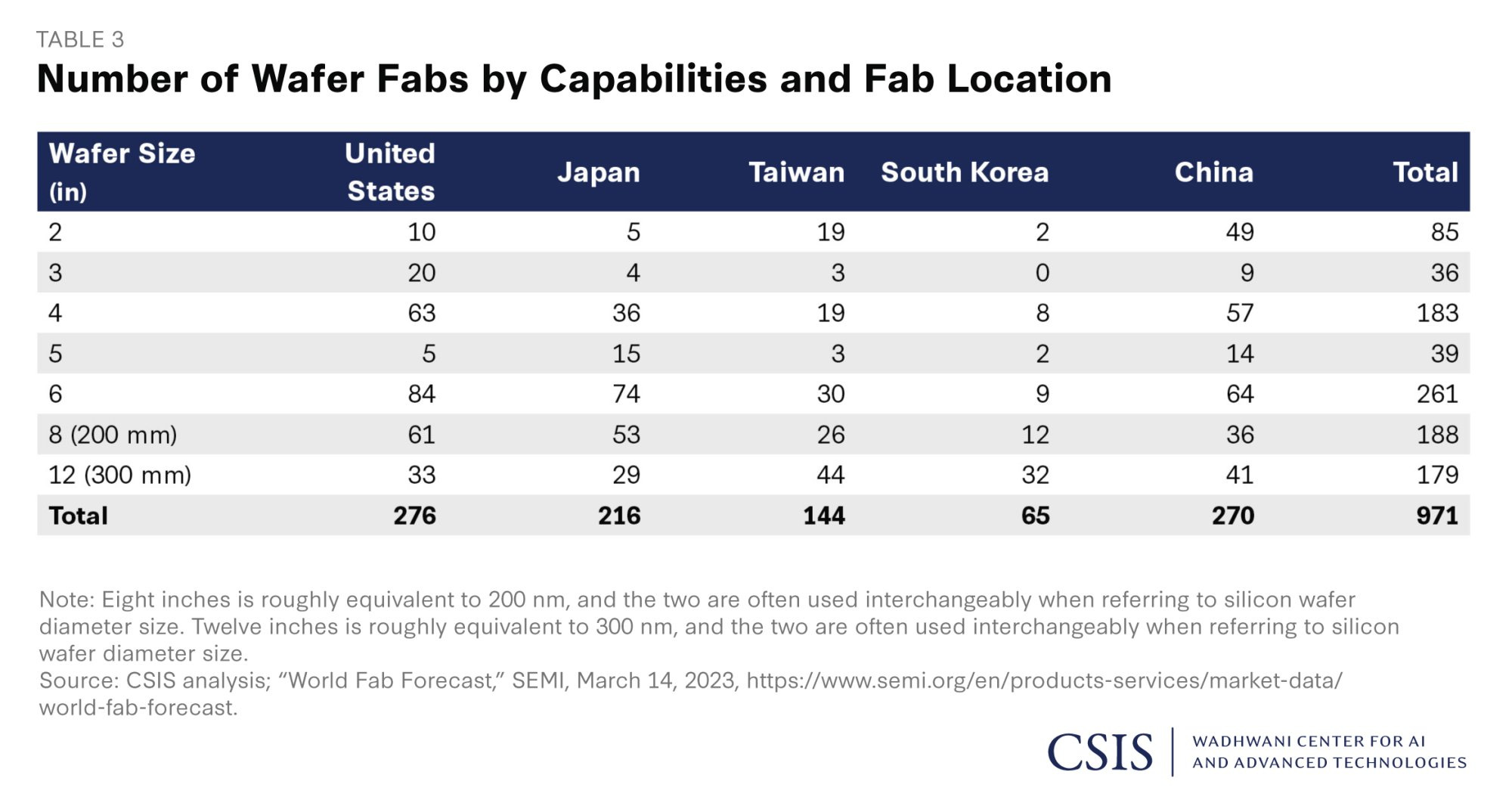

As a result, the number of places that can actually manufacture chips at different wafer sizes is incredibly small. From data produced back in 2023, there are just over 1000 chip fabs worldwide, though they are often specialised for a specific wafer size and composition. As we can see in the table above, there are fewer than 100 fabs in the world that can manufacture chips on wafers that are 2 inches in size. So if you’re seeking to manufacture these chips, your options are even more limited than when operating on larger sizes. Though it’s worth stressing the most common wafer sizes for contemporary PC chips for the likes of CPUs and GPUs are in the 8-inch (200mm) to 12-inch (300mm) region.

In addition, the vast majority of these fabs - regardless of wafer production size - are based in Asia. While the concept of the semiconductor and much of its initial development and manufacturing started in Silicon Valley in the United States - which is how the region got its name - much of fabrication was offloaded to Asian markets from the 1970s onwards. This has led to Taiwan becoming a global powerhouse in semiconductor manufacturing. The Taiwan Semiconductor Manufacturing Company (TSMC) is not just the largest non-US company in the world (worth ~25% of Taiwan’s GDP), but it controls around 70% of the global market for contract chip manufacturing.

Fabrication is the Bottleneck

TSMC has developed a leading edge in the space given it doesn’t design chips: its sole focus is fabricating them. This helps cement TSMC’s position as the world’s leading chip fabricator, because they’re not in competition with chip designers, they’re simply helping them achieve their ambitions.

As I mentioned, most US-based companies offloaded their fabs between the 1970s and 1990s as a means to cut costs, given they knew it was more cost effective to outsource to factories in Asia rather than maintain them in the United States. The prospect of a significantly cheaper yet equally skilled labour force was all too alluring. Hence in the modern day, many have focussed solely on becoming chip designers - referred to as ‘fabless manufacturers’. This means that the vast majority of major chip companies you associate with computing don’t actually make their own chips. This includes the likes of:

Nvidia: Famous for their gaming GPUs and more recently their AI accelerators like the H100.

AMD: Nvidia’s competitor who are increasingly involved in designing chips for games consoles such as the PlayStation and Xbox.

Apple: The chips made for the MacBook, iPhone and iPad are designed by the tech giant, but manufactured by TSMC.

Arm: Designers of the CPU technology for mobile devices that is used in Raspberry Pis, Samsung Galaxy smartphones, and even the Nintendo Switch 2.

Qualcomm: The designers of the Snapdragon chipset, used in the likes of the Meta Quest 3 VR headset.

At the time of writing, Intel is the only major computer/gaming chip manufacturer that actually owns chip fabs, and runs around 15 different sites around the world. Decades ago this was frowned upon as a huge cost for them, but it has since proven to be less of a liability and more of a strength.

The same can’t be said for memory. While there are only a handful of companies in the world that produce commercial-grade NAND memory, the big difference is that almost all of them operate their own fabs:

Samsung: While many will know them for their consumer-grade products, Samsung is one of the largest and most successful (Arm-based) CPU and NAND fabricators in South Korea (with sites in the United States too).

Kioxia: A spin-off from Toshiba (where it was originally called Toshiba Memory), the company that created NAND memory in the 1980s. It is one of, if not the, largest producers of flash memory and solid-state drives in Japan.

Western Digital: While they have a handful of sites in the US, the bulk of their manufacturing occurs in Asia, but they don’t run their own fabs. They collaborate with Kioxia in Japan instead.

Micron Technology: Hosts a variety of fab sites across the US, Taiwan, Japan, Singapore and China.

SK Hynix: Another major South Korean NAND manufacturer with fab sites in their home country, plus in China and the US.

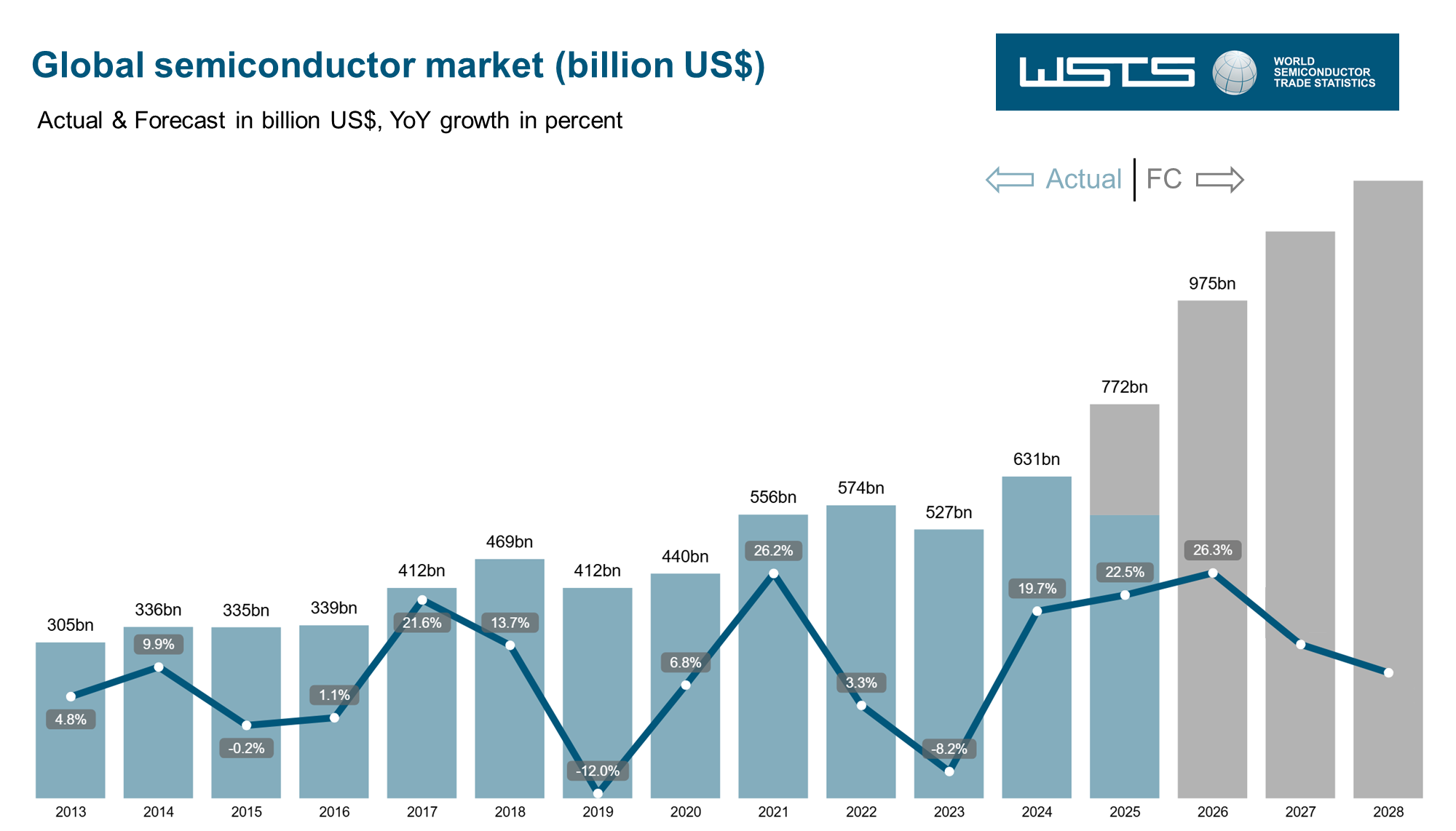

Now to give you an idea of the scale of manufacturing, as far back as 2021 it was suggested semiconductor sales reached 1.15 trillion units, with over $570 billion in sales. Yet the whole thing is largely driven by a comparatively small number of businesses.

One critical aspect to raise here is that the concentration of fabs in Asia has become a huge sticking point, both in terms of international logistics and in political motivations and action. Many western governments, notably the US, are concerned that their tech industries - and especially their military - are heavily reliant on foreign agencies in constructing these vital resources. This has had an impact notably on United States fiscal policy and military strategy.

While there has been much bluster from the Trump administration about the need to bring manufacturing jobs back to the US, it was the Biden administration that signed the ‘CHIPS and Science Act’ in 2022 to make it happen. This bill introduced $280 billion of investment that helped to, among other things, build fabs in the United States. TSMC then jumped on this bandwagon, having initially declared a desire to build a fab site in Arizona in 2020, and then broken ground in 2022 having received financial support from the bill. This new fab kicked into production in 2025, and before the first production line was even assembled, Apple had signed up to become its largest customer.

Meanwhile, the US Navy continues to have the Seventh Fleet primed and ready from its operating base in Japan in the event that China attempts to take the Taiwan Strait. It is recognised by the US government and military that Taiwan is a critical US asset, and there are very real fears that China could starve the western world of chip manufacturing capabilities should they invade and assimilate the country. This is still to this day an issue that influences their actions within the Pacific theatre.

The WHY: TLDR

The important thing to take away from this segment is that:

Fabrication of the components needed for DRAM and HBM is incredibly expensive and time-consuming.

The lead times on this are staggering: it takes weeks to manufacture the silicon, and complete chips can take months at a time.

The number of available fabs in the world is frighteningly small for the number of chips being manufactured.

How Did This Happen?

So bringing it all together, what do we have?

A consumer market that requires specific types of memory for electronic products, especially in PC and gaming.

An AI industry whose memory requirements consume consumer-grade memory resources during manufacturing.

Large-scale industry investment in AI datacentres, thus increasing demand for HBM.

A fabrication market that has significant supply chain bottlenecks given how few companies can actually build the memory needed.

And all of this then brings us to the all important fact: AI companies pay a premium for HBM.

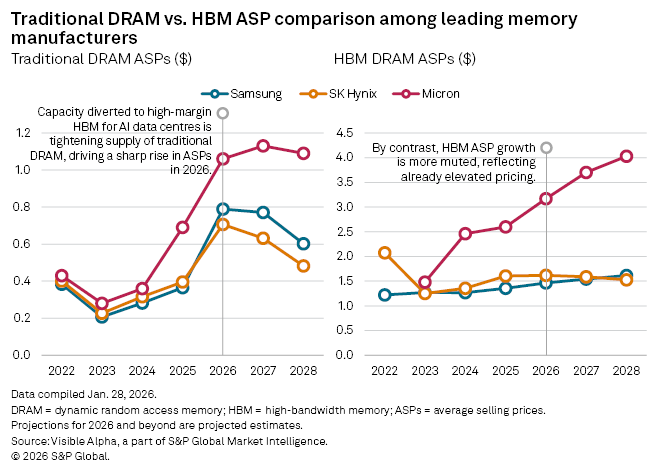

This creates a dynamic where it is more financially lucrative for the fabrication companies to divert their resources towards manufacturing HBM rather than DRAM. The result is a situation where consumer-grade memory reduces in supply, and manufacturers either sell their existing supply at higher prices for pure profit, or have to absorb the costs of competing for limited fab space, which also increases the price.
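The incentive at work can be sketched as a toy model. Everything here is hypothetical: the capacity, demand and margin figures are invented for illustration, and real fabs cannot switch production lines anywhere near this freely. It only shows the direction of the pressure described above.

```python
def allocate_wafers(capacity: int, hbm_demand: int,
                    hbm_margin: float, dram_margin: float) -> tuple:
    """Toy greedy allocation: serve the higher-margin product first.

    Returns (wafers_for_hbm, wafers_for_consumer_dram). Assumes, as a
    simplification, that consumer DRAM can absorb any leftover capacity.
    """
    if capacity < 0 or hbm_demand < 0:
        raise ValueError("capacity and demand must be non-negative")
    if hbm_margin >= dram_margin:
        hbm = min(capacity, hbm_demand)
    else:
        hbm = 0
    return hbm, capacity - hbm


# Hypothetical numbers: 100 units of fab capacity, HBM paying 3x the margin.
for demand in (0, 40, 120):
    hbm, dram = allocate_wafers(100, demand, hbm_margin=3.0, dram_margin=1.0)
    print(f"HBM demand {demand:>3}: {hbm} to HBM, {dram} left for consumer DRAM")
```

With fixed capacity and a premium on HBM, every extra unit of AI demand comes straight out of what is left for consumer memory, and once that demand exceeds capacity nothing is left at all.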

This has led a number of DRAM manufacturers, notably Micron, to declare that they’re divesting from the consumer market. They can make significantly more money by building HBM for AI companies than by bothering with the little people. All in pursuit of profits based around the building of datacentres we still don’t really know if we’ll even need.

Meanwhile there are additional factors that could further increase cost. For one there is the cost of resources, be it the precious materials used in chip manufacturing or fuel. There have already been issues with precious metal acquisition that, combined with COVID lockdowns, heavily affected chip production in the early 2020s.

In the present day the ongoing conflict across the Middle East is a significant cause for concern in the chip industry. The ongoing air and missile strikes by the United States and Israel on Iran and neighbouring countries have led to the closing of the Strait of Hormuz and disrupted the transit of fuel, which is only adding to these complexities. While most countries driven heavily by fab-based industries, especially Taiwan, retain stockpiles of key materials, they typically have limited fuel reserves. TSMC accounts for up to 10% of all of Taiwan’s energy consumption, and as an island nation Taiwan relies heavily on imported fuel, typically with only a week or two’s supply at best. Hence should the Strait of Hormuz remain shut through the summer, things could get even worse as fuel prices rise and scarcity impacts their production lines.

A Manufactured Failure

With the facts laid out as they are, the one thing to really take away from this is how entirely foreseeable this was to the people that caused it. As we mentioned already, chip fabrication has a long lead time, and companies engage with the fabs early to ensure their supply chain will not be disrupted as they seek to execute on new projects. Hence many of the companies involved knew that their heavy investment in HBM would starve the consumer market as far back as late 2024.

Meanwhile companies are only going to starve the remaining market until the HBM boom dies down. Most recently news came of Apple seeking to swallow up as much of the mobile DRAM market as it can - even potentially at a loss - so as to avoid letting competitors impact their market share. By comparison, the likes of Qualcomm are slashing production budgets for specific chips, while Samsung are increasing prices for devices (even in South Korea).

In Part 2…

So with part one in the bag, where we explained how we got here, we’ll dig a little more into the future of the situation in a later issue. In part 2 we will discuss:

What efforts are being made to mitigate this, if any?

How soon could this turn around, if at all?

The status of the AI data centre rollouts.

What about the building of new fabs in the US?

New research in AI developments that could upend the situation.

Wrapping Up

Wooft, well… that was upbeat! But hopefully you have a slightly clearer idea of a lot of the realities behind why this is all happening. We’ll get back to a slightly more fun and cheery topic next week, I hope, before returning to part 2.

Thanks for reading and catch you all soon.