That DLSS5 Reveal Will Have Long Lasting Damage for Games | 01/04/26

The interesting stuff in DLSS 4.5 was completely drowned out by you know what...

Game AI’s Existential Crisis is Now on YouTube

Japanese Indie Dev Gets Ripped Off Thanks to Gen AI

Warhorse Studios Replace Translators with AI Tools?

Tommy’s Take on the DLSS5 Debacle

The AI and Games Newsletter brings concise and informative discussion on artificial intelligence for video games each and every week, including industry news, innovative research, emerging trends, and our own exclusive editorial and reporting.

You can subscribe to and support AI and Games, with weekly editions appearing in your inbox. If you'd like to work with us on your own games projects, please check out our consulting services. To sponsor, please visit the dedicated sponsorship page.

Hello everyone, welcome to this week’s edition of AI and Games. I’ve been busy these past two weeks reporting on the AI situation from GDC, as well as completing the Game AI Existential Crisis essay, so I figured let’s do a shorter edition this week catching up on the news, and then spend a few minutes giving my own take on the long-lasting damage that will stem from that god-awful DLSS5 reveal.

Don’t worry, no April Fools here. We’re all about consistency around here.

Follow AI and Games on: BlueSky | YouTube | LinkedIn | TikTok

Announcements

A couple of quick things to get through first.

Reminder: CoG Industry Day Submissions Close April 10th

As mentioned last week, the submissions for talks and demos to the industry track of the IEEE Conference on Games 2026 in Madrid close April 10th.

Visit the website for more info.

New on YouTube

Having just finished writing the series in the newsletter, I figured it was time I started translating it across to our video channels. Part 1 of Game AI’s Existential Crisis is now live on YouTube as a video essay. It’s a shorter and slightly re-written version of the original shared in the newsletter, but still comes in at a whopping 50 minutes in length.

AI (and Games) in the News

A quick round-up of a bunch of news stories that have cropped up in the past two weeks.

Remember When We Trained AI to Play Games? Well LLMs Suck At It [IEEE Spectrum]

I wanted to give a shout-out to my academic friends Georgios Yannakakis and Julian Togelius, whose recently published paper on LLMs trying - and failing - to play video games successfully highlights an interesting regression in AI research versus some of the work happening in games-based artificial general intelligence (AGI) around 10 years ago. Perhaps an issue of the researchers digest on this paper? Let me know if you fancy it.

Sony AI Develops AI Models That Protect Copyright? [Automaton]

The long and short here is that Sony AI have tried to develop a generative model that can create AI-generated outputs that respect copyrighted material. Protective AI (PA), as they call it, is essentially an AI model that is still trained on the copyrighted material, but it has it baked into the training process that it needs to avoid generating outputs that look like it. While still in development, it suggests the solution to stopping AI creators sticking artists’ work into their model so it can reproduce it is to stick the artists’ work into the model so it does not reproduce it.

This reads like a sensible idea from a research perspective, but I doubt it will have much traction in the real world.

Unity Shuts Down IronSource To Pursue AI-Powered User Acquisition [GamesIndustry.biz]

Remember back in 2023 when Unity merged with the ad network IronSource? No? Well nobody liked it. Everyone was unhappy about it at the time given it lumped the Unity game engine with the rather annoying and predatory practices of a mobile-ads company. So good news, they’re shutting the whole thing down later this month.

Why? Well as Jon Hicks reports over on GI.biz, the real revenue growth is coming not from ads but from AI-driven user acquisition. This is achieved courtesy of the ‘Vector’ platform that Unity launched back in 2025.

Japanese Indie Dev Has Work Ripped Off Thanks to Generative AI [Automaton]

So here’s something I’ve been warning about for years: if developers are continually sharing their work online as they develop their projects, what’s to stop some shitty individual from taking your work and cloning it using generative AI?

Well, I was proven right last month when Japanese indie developer and YouTuber Kamaboko had their game ‘Typing Magician’ cloned and reproduced by another user. After Kamaboko shared their work on the Unityroom site, another user posted their own version of the same game, achieved using AI tooling.

I mean, the person who did it even shared how they did it on YouTube. One for all of you out there that have some experience in Japanese!

Kingdom Come Deliverance 2 Language Editor Replaced by AI? [PCGamer]

Warhorse Studios’ Kingdom Come Deliverance 2 is a beast of an RPG, and it requires a lot of effort to get all of the dialogue written up and translated into various languages. So it’s rather disheartening to see this post emerge on r/kingdomcome on Reddit, according to which Max Hejtmánek, who was previously hired as a Czech to English translator for the game, was fired last week since the studio felt using AI for translation would “make the company more effective”.

Sadly translation is one of the areas most heavily hit by AI in many business and creative sectors, even though it’s nowhere near as good in practice as a human expert. You would think a games studio that focusses heavily on delivering a high quality RPG experience would know that?

Crimson Desert Team Convinces Nobody in Saying AI Art Was Placeholder [IGN]

Crimson Desert, that TikTok game you’d never heard of until two weeks ago, has come under fire for being full of AI-generated art assets despite having no AI disclosure on Steam at the time of launch. Since then developers Pearl Abyss came out with a waffling and unconvincing statement that suggested these were placeholders from early AI experimentation, convincing absolutely nobody. Oh and the Steam disclosure was adapted, thanks guys!

Brief Op/Ed: Nvidia Completely Misses the Mark with DLSS5

It’s been over two weeks now since the unveiling of DLSS5 at Nvidia’s GTC event, and naturally I’ve been busy writing other things, but I had also refrained from getting involved until I’d heard and seen more of what’s going on here.

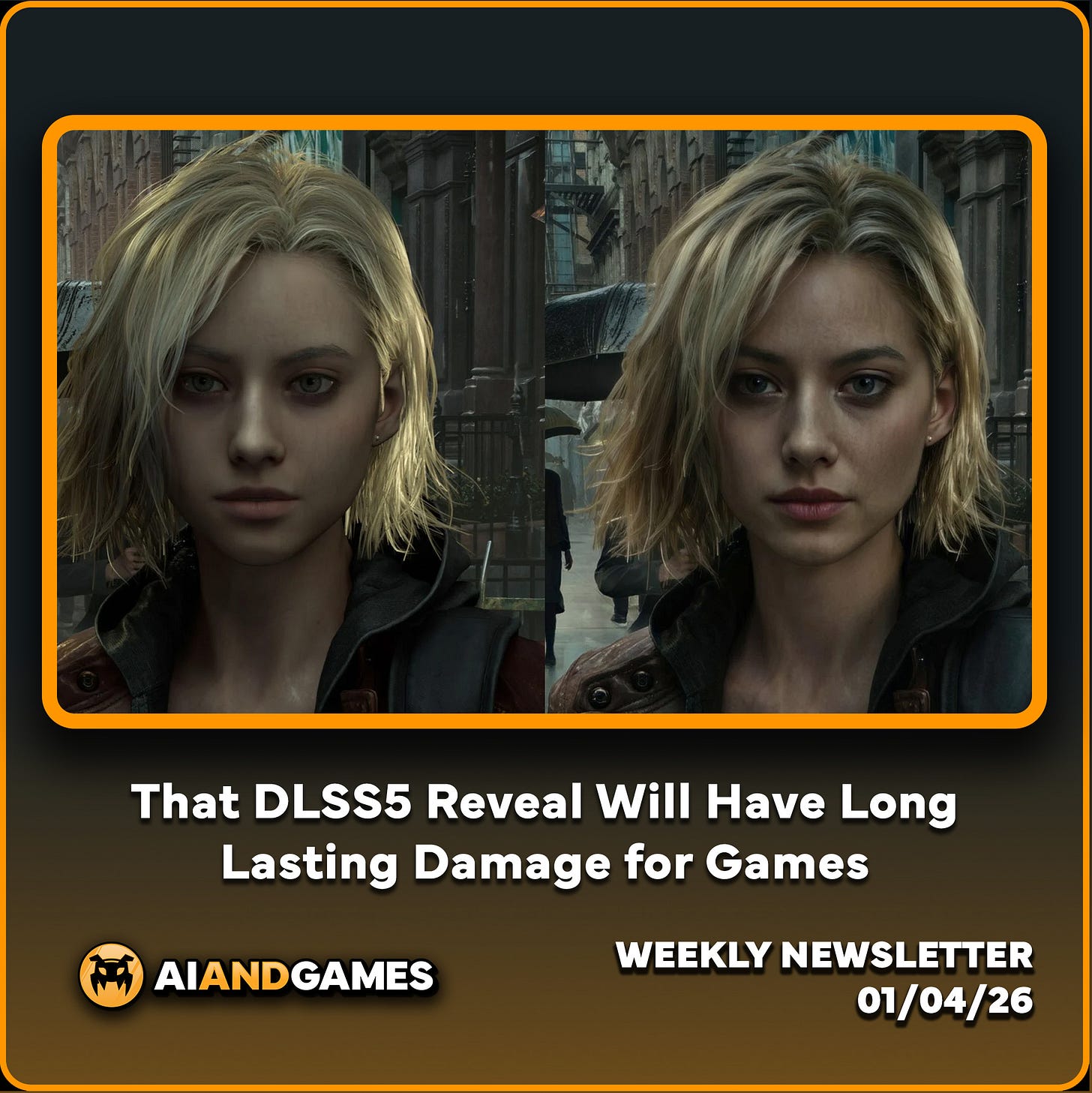

If you’ve somehow missed all this furore - first of all, lucky you - the latest version of Nvidia’s AI-upscaling technology DLSS was showcased at their GTC event in San Jose, and it has been widely condemned - and rightfully so - for fundamentally failing to grasp what value this technology has within the games industry. The new version introduces a new lighting rendering pass, plus the controversial neural face renderer that we reported on when it was announced in January of 2025. That renderer received a lot of criticism at the time given it was prone to beautifying faces akin to an Instagram filter (i.e. yassified), as well as lightening darker skin tones.

As you can see from the video above, the attempts to use both aspects of this updated tech look awful. The faces aren’t consistent, the lighting is washed out, the faces look waxy and overly lit, and of course it also fundamentally changes how the game looks versus how it was intended. It has rightfully been trolled on social media, with the video above sitting at over 2.3 million views, with only ~16% likes compared to more than 100k dislikes. Not to mention the comment section eviscerating this idea. But hey, Nvidia’s stock only dropped a mere 0.3% on announcement, so that must mean it’s good, right?

I have a sneaking suspicion someone’s been drinking their own Kool-Aid.

The Unspoken Agreement on DLSS

DLSS has since its inception been focussed on increasing the overall rendering quality of a game, and this is where DLSS5 not only drops the ball but highlights Nvidia’s arrogance over the issue. DLSS 1 and 2 were focussed on trying to increase resolution of rendered games by taking in lower-quality versions of the rendered frame and then outputting a higher quality version. So in essence, you feed in a 720p image to get a 1080p one, or a 1080p frame for a 2160p render. Ultimately you get the graphics card to do the rendering work, then the AI upscaler works to increase the size and pixel density of the image. This was then enhanced in DLSS3 with frame generation, meaning it would also attempt to figure out intermediate frames akin to how video rendering works. I actually made a video about this in collaboration with Nvidia when it was announced back in 2022.
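To put rough numbers on that, here is a minimal back-of-the-envelope sketch in Python. It has nothing to do with how DLSS is actually implemented: it just counts pixels for the standard 16:9 resolutions mentioned above, and shows how few of the frames you see come from the game's renderer once frame generation is involved. The function names are made up for this illustration, and the one-rendered-frame-plus-N-generated-frames model is a simplifying assumption.

```python
# Standard 16:9 resolutions as (width, height).
# The helper names below are hypothetical, invented for this illustration.
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "2160p": (3840, 2160),
}


def pixel_count(name):
    """Total number of pixels in a frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def upscale_factor(render_res, output_res):
    """How many output pixels the upscaler produces per rendered pixel."""
    return pixel_count(output_res) / pixel_count(render_res)


def rendered_frame_share(generated_per_rendered):
    """Fraction of displayed frames that came from the game's own renderer.

    Assumes each rendered frame is followed by a fixed number of
    generated (interpolated) frames.
    """
    return 1 / (1 + generated_per_rendered)


if __name__ == "__main__":
    for render_res, output_res in [("720p", "1080p"), ("1080p", "2160p")]:
        factor = upscale_factor(render_res, output_res)
        print(f"{render_res} -> {output_res}: {factor:.2f}x the pixels")

    share = rendered_frame_share(1)
    print(f"One generated frame per rendered frame: {share:.0%} rendered")
```

So a 1080p to 2160p upscale means the GPU only renders a quarter of the pixels that end up on screen, which is exactly why the approach is attractive when native rendering is too expensive.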

Since then DLSS has worked to continue to optimise the overall quality of the underlying technology. In fact in the past year or so we’ve seen two iterations of DLSS4 hit the market which include optimisations to overall image quality, reducing the requirements of the underlying in-engine render, plus an increase to the number of intermediate frames that the Frame Generation technology can provide.

Now all of this stems from a fundamental alignment between the industry and the provider. The games industry continues to push increased graphical fidelity as a means to showcase the biggest and best games, but to render at higher resolutions with equally high framerates is computationally expensive, and often beyond the capacity of many end users’ GPUs. Hence DLSS was a workaround: allow players to experience the game by instead using an AI-supported upscaling process rather than through native rendering. This is particularly useful when running the likes of raytracing for dynamic lighting, which significantly increases the workload of the renderer.

And for the most part, the games industry has signed up to this unspoken agreement: that AI upscaling was an acceptable workaround for the ever-increasing hardware costs necessary for big-budget high-fidelity games.

Now while I have problems with the games industry’s obsession with graphics rendering, even I see some of the benefits of using the likes of DLSS in games. It helps enable players with lower power devices to engage with the latest games. It helps titles get ported to the Switch 2 which has an Nvidia GPU. In fact I wrote about this at the time of the Switch 2 launch heralding how important it was both for the future of AI tech used in rendering, but also that it was of sufficient quality that it would be used now as the sole primary means for certain games to be deployed on Nintendo hardware.

But I also have an issue with how the industry is beginning to rely on it even more now as a means to achieve base performance. The debacle surrounding Borderlands 4’s launch last year - where virtually every base setting required DLSS to be enabled - speaks to an over-reliance on the technology as a means to work around the necessary optimisations for a game at launch. Sure, a balance must be struck here, and yes some players hate DLSS - referring to it as ‘fake frames’ - but games like Borderlands 4 are a shining example of going too far in the wrong direction.

Fundamental Misalignment Between Product and Consumer

DLSS5 has broken that unspoken agreement, and it has put Nvidia in an awkward position as they attempt to sell a technology that nobody wants. Never mind that Jensen Huang says players “are completely wrong” about the technology (they’re not). Never mind that they won’t admit to what the tech does (it’s a post-render AI pass). Never mind that it’s currently not even feasible to run it on a single GPU. The most important thing here is that Nvidia has overstepped. Nvidia assumed that they knew better than developers what AI rendering should be doing to support the experience, and they have been burned by it.

It’s a considerable shot in the foot what with DLSS4.5 coming and going without much grief. An overstep as Nvidia now showcases technology that seeks to replace the original artistic intent of a game, running under the assumption that those games simply couldn’t achieve their original vision without Nvidia’s help. Now I know a few people who tried out the demos behind closed doors at GTC, and while some of them felt that the lighting features in DLSS5 could be useful if actually controlled by environment artists, they were unanimous in saying the faces were awful. Yassification aside, there are significant problems with the eyes being prone to not lining up, or even looking in the same direction. Plus there’s an awkward jitter in the facial movements, and the lighting on the faces often makes them look waxy and dirty, to the point that they sometimes have different lighting versus the rest of the scene.

But again we’re still in a space where the big issue is the game is being re-rendered to something that arguably does not fit the original artistic vision. The Grace Ashcroft example in Resident Evil: Requiem is an even bigger problem when you realise that she’s based on a real person. Grace’s face is based on that of Polish/American model Julia Pratt. While there is naturally going to be some artistic license applied, the face will still be expected to behave in a manner much like Pratt’s given they will use her for expression capture. The whole point of this process is to have a consistent baseline of physical appearance you can build Grace from. It’s not going to help if you then have an AI render sit atop it that won’t guarantee that consistency unless you can actually train the face renderer. But even then, in the game she’s not meant to look like Pratt, but Grace. So even in the best case example it’s a lot of effort that still results in the same problem of a lack of consistency.

Consistency is paramount for these sorts of things. Not just in the game itself, but all of the marketing building off of that. Both Leon and Grace need to be clearly identifiable and consistent so you can run billboards and all sorts of digital advertising such that people begin to recognise them. Marketing 101 here.

Plus, there’s an interesting line here in whether the likeness rights in the contract between Pratt’s agency and Capcom would extend as far as Nvidia fucking around with the face after the fact without either party’s permission?

To add insult to injury, Capcom stepped up to be one of the studios who acknowledged they didn’t know about the DLSS reveal until it happened. Now I do want to slap some cold water on this, given I highly doubt big companies are completely in the dark about Nvidia using their games without permission. Though I suspect they didn’t expect all of this to happen with their games front and centre. They figured Requiem was going to be used to showcase a DLSS demo, not that they would wind up in the centre of a war between AI bros and gamers.

Players Suddenly Realise DLSS is Generative AI (and are not happy)

So I mused about this in part 3 of the Existential Crisis, but it amuses me how Nvidia have dodged many a bullet in recent years surrounding the ‘AI slop’ problem in that DLSS came to be before the term 'Generative AI’ was normalised. The acronym - Deep Learning Super Sampling - highlighted the use of super-resolution convolutional neural networks for interpretation and reconstruction of images. It is essentially using the precursor to modern Generative AI techniques.

Now considering DLSS has, since version 4, used transformer networks - which are the standard for Generative AI - it is without a doubt a Generative AI technology, and has been since inception. But it felt like it snuck under the radar of consumer ire for the most part until this DLSS5 debacle, because it highlights all the problems and issues consumers have with AI slop.

And boy did they pick the wrong franchise to throw under the bus in the process. Resident Evil at this point is an institution. It has gone through highs and lows for sure, but one could argue it’s never been more popular than now, and this 30 year old franchise has unwittingly become a victim that ‘the gamers’ will rally behind.

I mean sure, none of this is going to impact Nvidia’s bottom line right now, but to me this is a pretty fundamental misstep that the gaming audiences will remember. The point where Nvidia truly showed their colours as an AI company that will flog their slop-building tech to the detriment of the artistry of games. I mean, this has been true for at least a year or so now, but at this point people might be thinking more about how AMD’s GPUs are a more viable alternative in their next PC build - especially given those are the GPUs being used in current and next generation consoles by Xbox and PlayStation.

And on that note, my open thought is what impact this will have on everyone else? Be it AMD’s FSR4 or PlayStation’s PSSR - both of which are often described as ‘Machine Learning-based upscaling’. Isn’t it interesting that they avoid referring to it as Generative AI, for fear of consumer backlash? Even though that’s exactly what both of them are?

Either way, this is not going to help in the long run with growing apathy and the downturn in gaming spend at this time, especially if it’s becoming all the more obvious to players that the hobby they have enjoyed as it stands is going to be messed with as a means to promote slop engines.

This will all blow over in the coming weeks, but it won’t be forgotten. I genuinely think this will prove to be an inflection point that we will return to in the coming years.

Wrapping Up

Short and sweet this week - I mean it’s about 1/4 of last week’s edition so yeah, I figured we should keep this one tight.

Next week, we should start having 2025’s conference talks online, plus a few other details for our premium subscribers! So I’ll see you then.