Next Level: A Warm and Encouraging Introduction to Procedural Generation | 06/05/26

I review the new pop-science book from PCG expert Dr Mike Cook

The AI and Games Newsletter brings concise and informative discussion on artificial intelligence for video games each and every week. Including industry news, innovative research, emerging trends, and our own exclusive editorial and reporting.

You can subscribe to and support AI and Games, with weekly editions appearing in your inbox. If you’d like to work with us on your own games projects, please check out our consulting services. To sponsor, please visit the dedicated sponsorship page.

Greetings one and all and welcome to this week’s edition of AI and Games. For this week we’re digging into the world of procedural content generation with the release of Next Level by prominent games researcher Mike Cook. But before we do that we have more conference talks, and a round-up of last week’s news!

Follow AI and Games on: BlueSky | YouTube | LinkedIn | TikTok

More AI and Games Conference 2025

A catch-up on the latest talks dropping from last year’s conference, courtesy of our presenting partners Xsolla!

Design for Everything in Kingdom Come: Deliverance II

Vadim Petrov, Warhorse Studios

Winner of the 2026 BAFTA for best narrative, Kingdom Come: Deliverance II is home to a highly complex simulation of non-player characters living their lives in 15th-century Bohemia. In the first of two talks on the subject from developers Warhorse Studios, we dig into the challenges of building sensory systems for characters expected to react within complex agent simulations.

No API, No Problem: Deploying Tiny, Fast, Fine-Tuned Models Offline

Keith Lia, Kristian Dam Pedersen, Raw Power Labs

The second talk for this week comes courtesy of our associate sponsors Raw Power Labs. Deploying small large language models has become a huge talking point in the industry, but building something bespoke for a particular problem and then deploying it locally on-device is a huge challenge. Keith and Kristian dig into how to build these systems and the challenges faced in fine-tuning, finding appropriate datasets, and working around expected performance budgets.

AI (and Games) in the News

A round-up of some of the headlines from the past week that caught my attention:

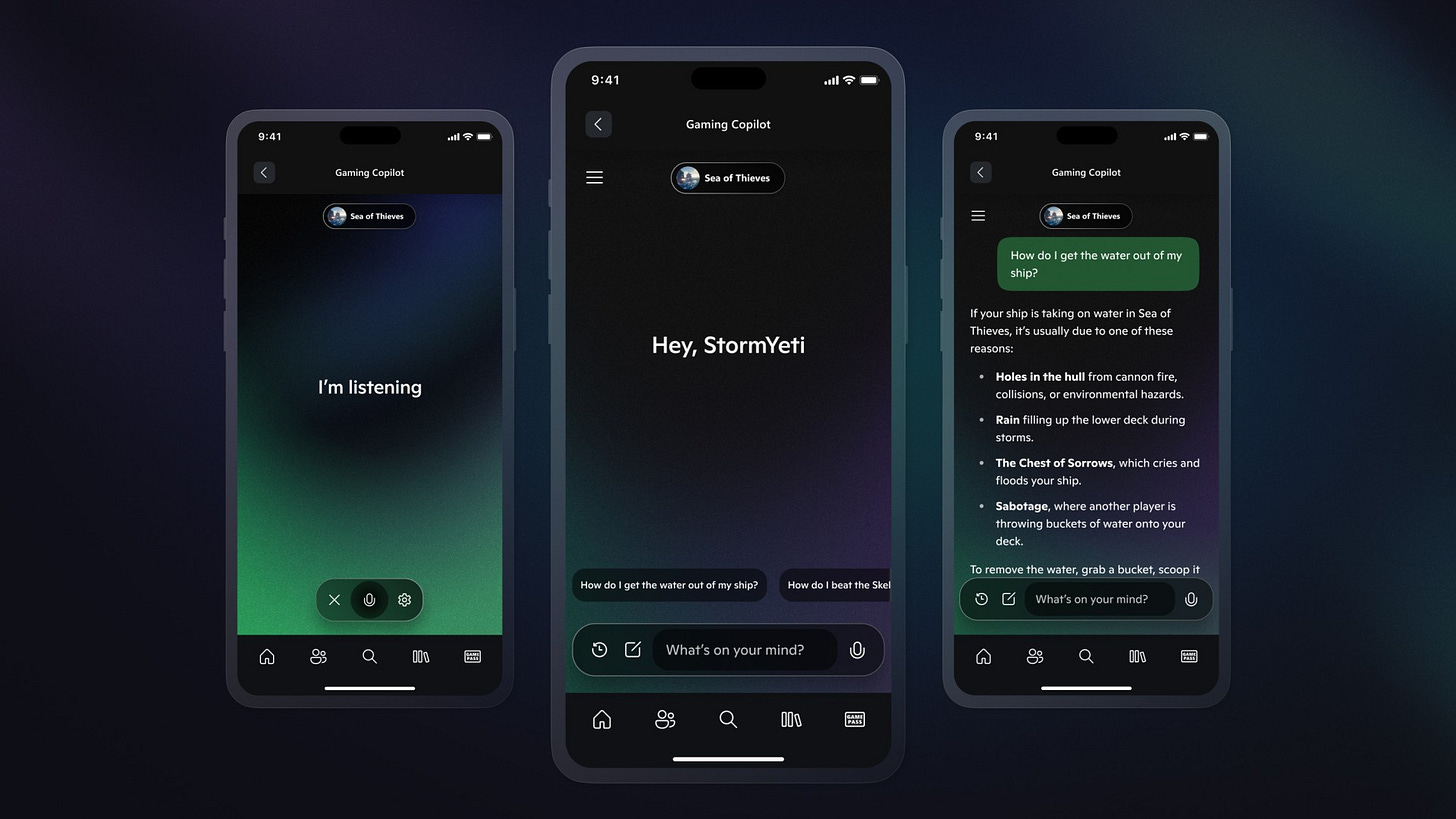

Xbox Staffs Up From CoreAI Team and Ditches Copilot in Refresh [CNBC]

Our main story last issue was the internal memo sent around Xbox by new head Asha Sharma that read more like an effort to score brownie points from low-hanging fruit than any meaningful assessment of the direction of the platform. As I pointed out at the time, right now the sentiment towards Xbox is poor, and whatever steps are taken to mitigate the impact AI will have on that need to be taken carefully - though most of their issues stem from the parent company developing tools and services nobody wants.

Well this led to two stories this week that sort of sit on either side of the same coin. First we have [via Kotaku] that Microsoft Copilot for Xbox is being discontinued. I’ve talked about this in previous issues, but the idea was for it to be a companion app you can engage with to help you configure and play your games. You may have missed that the PC version of Xbox Copilot launched last year - nobody cared. Now they’re ceasing development on the mobile and console versions. The only surprise here is that it’s been discontinued so soon. It was a tool that never struck me as meaningful for players, and existed largely to regurgitate information available within Xbox APIs, or simply cannibalise the hard work of the audience behind these games for their own gain: scraping information from guides, Reddit posts, news articles and much more besides to deliver it in a sub-optimal fashion.

So hey, another low-hanging fruit grabbed by Sharma and her team, and it’s the team that’s the other story. As reported from an internal memo later shared by CNBC, Sharma is stacking the deck with four members of the leadership team from Microsoft’s CoreAI among others, alongside four promotions from within Xbox and two resignations.

Is this a power grab for the Xbox division from CoreAI? This reads to me as a top-down pivot in the design, growth, delivery and management of Xbox, one that tries to treat the games pipeline like AI product development. You have people with decades of experience entering a sector where they, like Sharma herself, have zero experience of game development.

Once again, lots of changes, yet right now nothing that convinces me this is going to prove effective.

Blender Backpedal on Anthropic Partnership, But Keep The Money [Blender]

Last week we highlighted that Blender were receiving a lot of flak from users after they opted to have AI company Anthropic join the Blender Development Fund as a corporate donor. This suggests all sorts of sketchy things given an AI company is investing in a 3D modelling tool - a great opportunity to get insight into training and a potential avenue to explore their own work in the space. Unsurprisingly - and it’s never a surprise, let’s be honest - users of Blender were far from amused. In a statement put out at the weekend Blender have announced that they were very surprised by this (uh-huh), and that they need to do more to address how AI intersects with the organisation.

They’re keeping the money though. It’s now a one-off donation. Yeah, I suspect little has changed behind the scenes.

Warhorse Studios Reddit AMA Comes Under Fire Over AI Policies [Reddit]

In a recent issue we reported on how the English voiceover director for Warhorse Studios, Max Hejtmánek, was reportedly let go as it was made clear to him his role was now redundant in an age of Generative AI. Well, the studio’s discipline leads took to r/gaming for an AMA on Reddit and they got appropriately rinsed for this decision. The questions about AI disrupting the studio’s processes began to derail the Q&A as people asked whether their “Gemini premium subscription will translate the next game”, or if they planned to replace the creative directors with AI. It concluded with the following statement from the studio:

“We hear you and your concerns. Hopefully this explains the situation a bit. We do not see AI as a substitute for human work, and we are currently looking to expand the company, including our translation team. Some team members find AI useful during early stages of production. However, we do not use AI-generated content in the final game and we have no plans to change this in the future. Thanks for being with us today”

This rather flies in the face of the situation we raised from the end of March. No clarification was given to the firing of Hejtmánek, and I doubt anyone was convinced by their responses.

Roblox’s New GenAI Stack is Stadia and DLSS5 Smashed Into One [Roblox]

Last week Roblox showcased the latest bit of Generative AI work coming out of their research labs. After all, as we’ve reported before, Roblox does A LOT of stuff in and around the AI space. But this one, well… it would be a fresh new layer of horrid were it not for the fact that Stadia suggested the same bad idea years ago.

As reported in a blog post by Senior VP of Engineering Anupam Singh, ‘Roblox Reality’ is a hybrid architecture where a video generation layer gathers geometric data from the game engine and then uses that to re-render the existing game, allowing game developers to access “unprecedented visual fidelity and motion on top of traditional persistence and structure, without increasing development costs”.

Now they say it doesn’t increase development costs, but then later in the same post they acknowledge that maintaining this architecture - which renders video directly to the player from the ‘video world model’ that they’ve built - “is currently cost-intensive, and achieving high-fidelity, real-time performance, such as 2K resolution at 60 Hz, remains a development challenge.” Never mind the fact that it wouldn’t guarantee visual coherence over long play sessions or in multiplayer.

I mean, look… wearing my AI hat this is an impressive bit of work. But for a game it’s a rather pointless exercise, unless you’re Roblox. In theory this could lead to a future where Roblox games are streamed as a video rather than as games running on the platform - thus fully transitioning Roblox into a cloud distribution system. But it also means that, once again, developers have less control of the visuals of their game, given they’re now in the hands of an AI model whose output frankly looks awful. Bear in mind in the video above, the top-left is what it actually looks like in Roblox, whereas the bottom-left is what their AI is achieving - the bottom-right is a visualisation of what they hope it will look like. Either way, it’s rough.

And yeah, Stadia already pitched AI rendering for an online game platform in what… 2019? Back then they were suggesting style transfer via deep learning to change how your game rendered, and then nothing came of it because, well… Stadia.

Well, at least it isn’t DLSS5. Oh yeah…

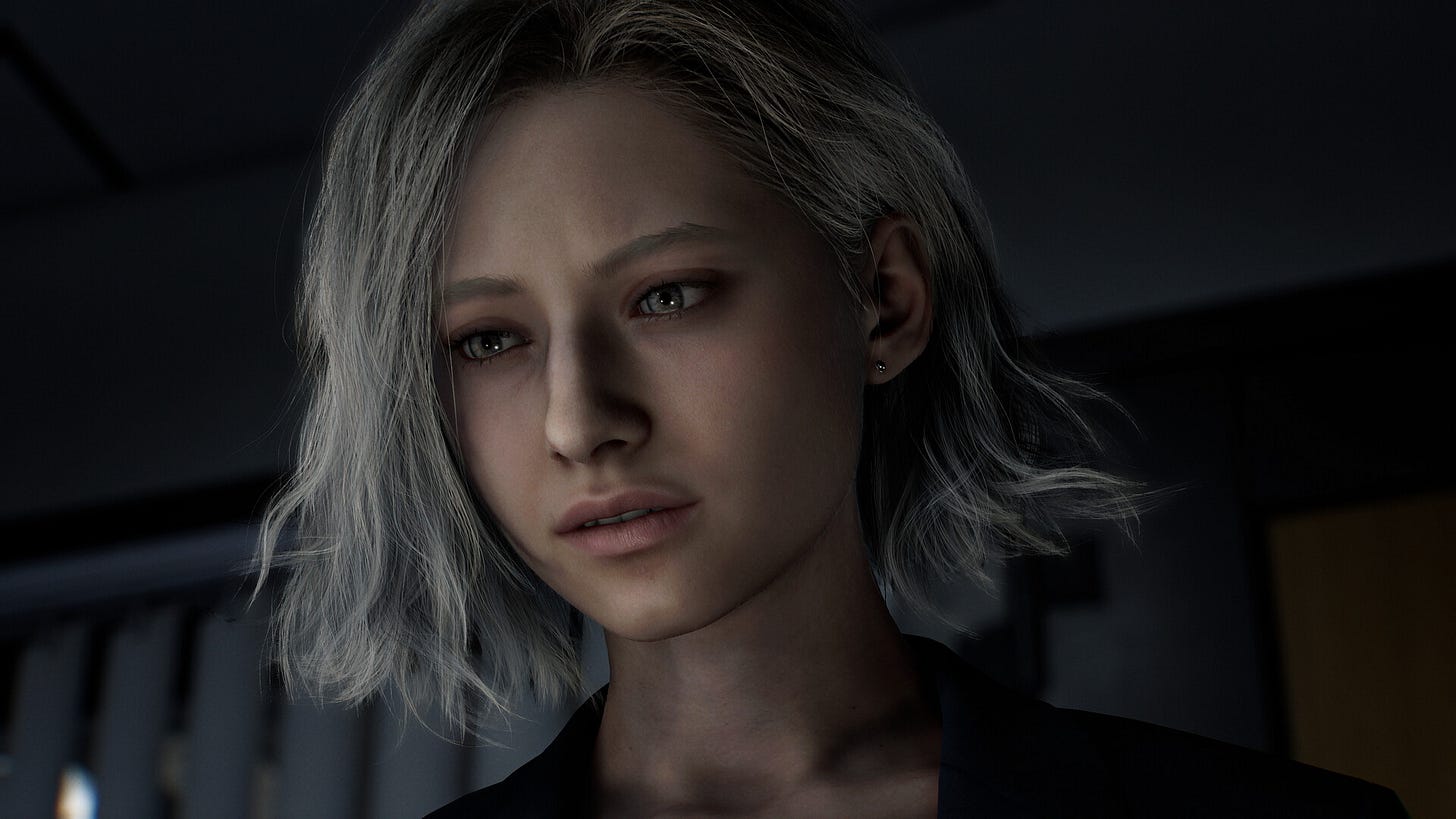

RE: Requiem Director Dumps on That DLSS5 Demo [Eurogamer]

In a sit-down interview with Matt Wales at Eurogamer, Resident Evil: Requiem director Koshi Nakanishi was asked about the debacle surrounding the DLSS5 demo that portrayed Requiem’s protagonist Grace Ashcroft as an Instagram model. While Nakanishi-san avoided commenting specifically on their partnership with Nvidia, or the fact they apparently didn’t know it was going to happen, he did comment on the audience reaction, and how they’re rather vindicated in their original design:

"the fact a lot of players commented they really liked the original design of Grace and didn't want to see it changed was a positive... It meant we got the design right [and] points to the fact that Grace quickly established herself as a fan favourite, that people had such strong opinions on her design."

Something something, artistic direction is key, something something…

Unity Announces Their New AI Features, Again [Unity]

For the third time in as many years, Unity has announced their new suite of AI assistance features. It is advertised around the principles of ‘Ask’, ‘Agent’ and ‘Plan’: ask for help with the built-in assistant, have an agent create assets, make changes or update settings, or use an agent to generate plans to support you in your game development process. Long story short, it’s a new collection of Generative AI models designed to support users in building their games.

This is a paid-for service: 1,000 monthly credits for Unity AI agents are provided as part of a Unity Personal license, and you can double that to 2,000 if you’re happy to pay for the eye-watering $210 per-month pro license. It was announced on Monday with a promotional video that shows absolutely none of it in action.

We’ve had Unity drop new features like this on the regular only to abandon or reshape them. This is the latest version essentially of what was Unity Muse, first launched in 2023 and then bundled into Unity AI in 2025. Though now more of an effort has been made to integrate it into the engine as a package (it doesn’t come pre-installed as part of Unity 2026).

Unsurprisingly the AI dude-bro fans on LinkedIn and Twitter are excited about finally executing on “that game idea” they’ve had for several years and have somehow been unable to execute. Meanwhile the regular Unity game developers out there have been far from thrilled. If you thought Steam had a slop problem before, oh boy… let’s give it six months.

NEXT LEVEL: Making Games that Make Themselves

Mike Cook / Bloomsbury / Out May 7th

In 2026, if I were to say we’re going to review a book about ‘generative design in video games’, your first instinct would be to think Generative AI. It has become such a dominating conversation in the industry, and of course this here publication, that it’s easy to forget that the vast majority of game development is simply uninterested. For many designers, thinking about generative design means thinking about algorithm design, and about how specific formalisms and phenomena can be used to translate ideas into gameplay. Procedural content generation, the practice of making things that make other things, continues to be a prominent and powerful part of the game development community, most notably in hobbyist and indie spaces as people build everything from eclectic demos to fully-fledged commercial titles. Or in the case of many of the games in this book such as Spelunky, Minecraft, Dwarf Fortress, and Caves of Qud: an eclectic demo that became a genre-defining breakout success.

‘Next Level: Making Games That Make Themselves’ is a new book by Dr Mike Cook - a senior lecturer in computer science at King’s College London, and researcher in procedural content generation and automated game design. Published by Bloomsbury’s pop-science Sigma imprint, the book aims to provide an accessible overview of procedural generation as a concept, and how it has been employed in a variety of games in the industry.

Now of course it’s important I contextualise this review by stating I have known Mike both professionally and personally for over 10 years, and we have collaborated on a variety of projects in that time. But this book took me by surprise. I didn’t know he was working on it and was excited to discover it was launching but a month before another friend of the newsletter, George Osborn, releases ‘Power Play’, his book on politics within video games. It’s a good time to read my friends’ books it seems!

Cook sets out to take on a rather ambitious task: to conceptualise and define procedural design for the newcomer, but without an emphasis on mathematics and code. Sure, there’s a bit of math in there given there’s a need to discuss how randomness in generative design is achieved, corralled, and to an extent controlled. But the ambition is to provide a more accessible entry point for people without a background in computer science and game design, and highlight how many of the themes and concepts that emerge in the field are cyclical, reinforcing one another as new algorithms and design philosophies take shape.

For around 70% of the book, the focus is on introducing ideas, and reinforcing them with real world context - something that resonates strongly with my work for sure. Cook introduces ideas such as the random sampling of numbers and events and how this translates into video game design: how dice and tarot decks provide structure and form to randomness, and how these ideas translate into the galaxy generation of Elite or the dungeon generation of Rogue. But far from sticking to the PCG heyday of the 1980s, it doubles down on how generative grammars and template-driven algorithms are the crux of the level construction of indie-darling Spelunky, the game that really kickstarted the modern roguelike trend in contemporary indie gaming.
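To make the dice-versus-deck distinction a little more concrete, here is a minimal Python sketch of my own. It is not taken from the book, and the function names and syllable list are invented purely for illustration. Dice are independent draws, a deck is a draw without replacement, and a seeded generator will reproduce the same "galaxy" every time. I am not claiming this is how Elite or Rogue actually did it, only that it shows the general idea.

```python
import random


def roll_dice(rng, count=2, sides=6):
    """Dice: each roll is independent, so repeats are always possible."""
    return [rng.randint(1, sides) for _ in range(count)]


def draw_cards(rng, deck, count):
    """Deck: shuffle a copy, then deal from the top, so no card repeats."""
    shuffled = list(deck)
    rng.shuffle(shuffled)
    return shuffled[:count]


def generate_system_names(seed, count=3):
    """Hypothetical seeded generator: the same seed always gives the same names."""
    rng = random.Random(seed)  # private generator, isolated from global state
    syllables = ["la", "ve", "ti", "so", "ra", "en", "qu", "or"]  # invented list
    return [
        "".join(rng.choice(syllables) for _ in range(3)).capitalize()
        for _ in range(count)
    ]


if __name__ == "__main__":
    rng = random.Random(2026)
    print("dice: ", roll_dice(rng))
    print("cards:", draw_cards(rng, range(1, 23), 3))
    print("names:", generate_system_names(seed=7))
```

The design point is that the structure comes from how you sample, not from the random numbers themselves: a deck guarantees variety across a hand, while a fixed seed means an entire world can be rebuilt from a single number rather than stored.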

It’s a difficult needle to thread as the book weaves from die-rolls to Perlin noise in Minecraft and fully-fledged randomly-generated simulations in the likes of Dwarf Fortress. But a combination of grounded explanations and Cook’s self-effacing sense of humour helps maintain the momentum as you get a crash course in generative design in its many shapes. It’s also been a staunch reminder to me that I had half of these games in the backlog for my Artifacts video series, and I really need to get around to making them at some point…
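For a flavour of what the jump from die-rolls to noise looks like, here is a rough sketch of my own, again not from the book. To keep it short it uses one-dimensional value noise, a simpler relative of Perlin noise rather than Perlin noise itself, and every name and parameter here is an assumption for illustration. The idea is the same though: pick random values at fixed points, then blend smoothly between them so neighbouring terrain columns stay similar.

```python
import math
import random


def lattice_value(seed, i):
    """Repeatable pseudo-random value in [0, 1) for integer point i."""
    return random.Random(seed * 1_000_003 + i).random()


def smoothstep(t):
    """Ease curve on [0, 1] so the blend flattens out at each lattice point."""
    return t * t * (3 - 2 * t)


def value_noise(seed, x):
    """1D value noise: smooth interpolation between neighbouring lattice values."""
    i = math.floor(x)
    t = smoothstep(x - i)
    a = lattice_value(seed, i)
    b = lattice_value(seed, i + 1)
    return a + (b - a) * t


def heights(seed, width, scale=8.0, max_height=10):
    """Hypothetical terrain strip: one integer column height per x position."""
    return [round(value_noise(seed, x / scale) * max_height) for x in range(width)]


if __name__ == "__main__":
    for h in heights(seed=7, width=32):
        print("#" * h)
```

Compare that with calling a random number generator once per column: you would get spiky static rather than rolling hills. Larger values of `scale` stretch the hills out, and the same seed always rebuilds the same landscape.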

The closing chapters of the book reflect a little more on the present and the future of the field. After all, a book on generative design in 2026 that makes no mention of Generative AI might seem strange. But much like my work in exploring how AI is typically used in games, Generative AI once again provides little of substance. Cook does a good job of breaking down that while many Generative AI advocates insist LLMs and image generators are the solution to many of the challenges faced in game design, in truth they rob creators of the very structured randomness that all of the previous games explored in the book benefitted from: an unwieldy solution to a series of problems more effectively solved through a concise and reliable set of algorithms. This is followed with a bit more of an insight into the academic world of PCG and how research in the space has evolved over the past 20 years into something that has formed its own community. As a former researcher who published at these events, and even helped run a couple of them, it was nice to be able to revisit many of these ideas in a publication that will hopefully receive a lot more attention in the public eye than the research papers it references.

As it comes to a close, the book sits as an interesting overview of many of the techniques used in PCG in games, but it also gives insight into Cook as an educator. I have come to know the author more for his research - and late night chats at conferences - than as a teacher. So to see him take charge and try to explain the world of generative design from first principles for newcomers was rather exciting. The book also provides references to key texts, source code, research papers, developer talks and more to help give you further avenues to explore once you’ve reached the end. Selfishly I’ll admit that I was also taking notes on how to pull this off, given I’ve had aspirations to write my own book having penned the 60+ page Brief History of Game AI for the upcoming Goal State: Game AI 101 course. One day… gotta finish the course first.

As with any subject matter of this depth, the end of the book is but the start of the journey. If you’ve ever wondered how procedural generation works in games but lack the background to understand much of its underpinnings, Next Level is a great introduction to a subject matter with no clear throughline. But even for the more seasoned of us, it casts a fun path through the space where you’re still bound to learn something new.

Next Level is available from Bloomsbury, Amazon, and other book retailers from May 7th. A review copy was provided courtesy of Bloomsbury.